[ad_1]

It’s usually mentioned that enormous language fashions (LLMs) alongside the strains of OpenAI’s ChatGPT are a black field, and definitely, there’s some reality to that. Even for information scientists, it’s tough to know why, at all times, a mannequin responds in the best way it does, like inventing information out of complete fabric.

In an effort to peel again the layers of LLMs, OpenAI is growing a instrument to robotically determine which elements of an LLM are accountable for which of its behaviors. The engineers behind it stress that it’s within the early levels, however the code to run it’s obtainable in open supply on GitHub as of this morning.

“We’re attempting to [develop ways to] anticipate what the issues with an AI system will probably be,” William Saunders, the interpretability workforce supervisor at OpenAI, instructed TechCrunch in a telephone interview. “We wish to actually be capable to know that we will belief what the mannequin is doing and the reply that it produces.”

To that finish, OpenAI’s instrument makes use of a language mannequin (sarcastically) to determine the features of the parts of different, architecturally easier LLMs — particularly OpenAI’s personal GPT-2.

OpenAI’s instrument makes an attempt to simulate the behaviors of neurons in an LLM.

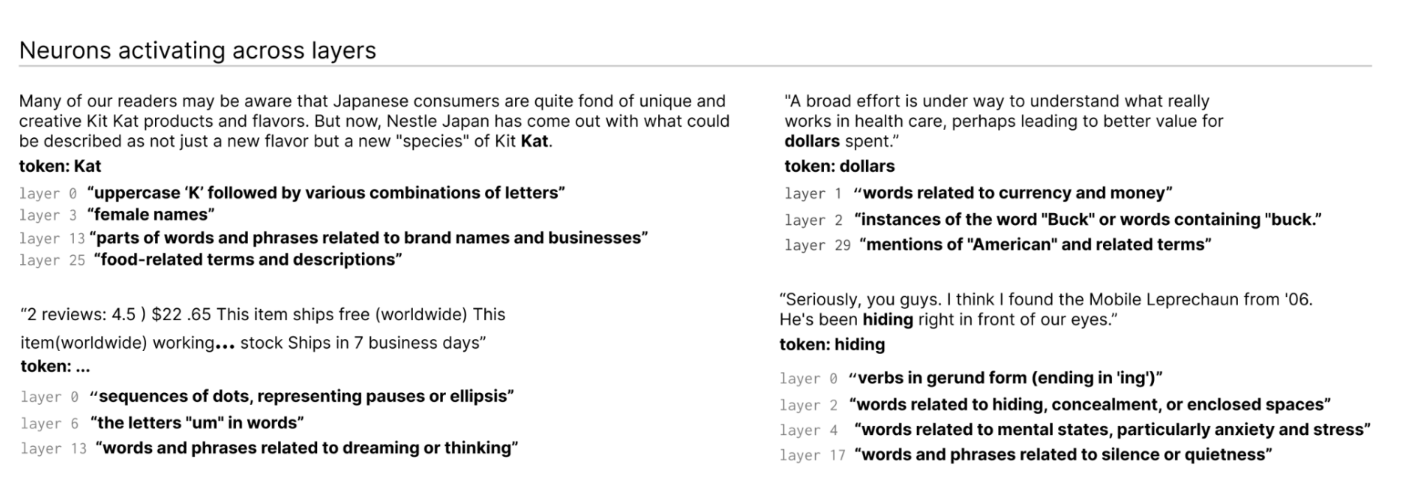

How? First, a fast explainer on LLMs for background. Just like the mind, they’re made up of “neurons,” which observe some particular sample in textual content to affect what the general mannequin “says” subsequent. For instance, given a immediate about superheros (e.g. “Which superheros have probably the most helpful superpowers?”), a “Marvel superhero neuron” would possibly increase the chance the mannequin names particular superheroes from Marvel motion pictures.

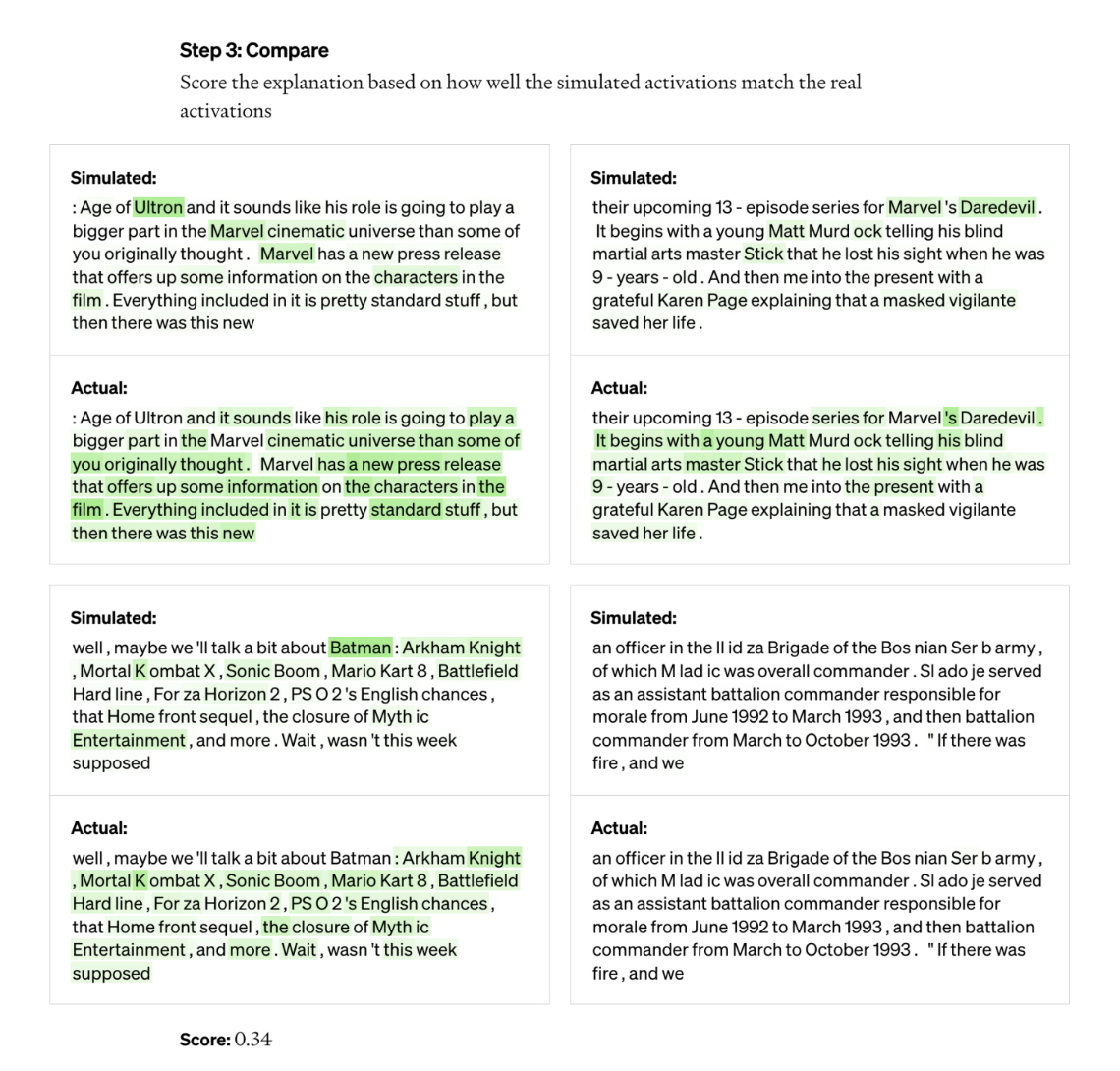

OpenAI’s instrument exploits this setup to interrupt fashions down into their particular person items. First, the instrument runs textual content sequences via the mannequin being evaluated and waits for circumstances the place a specific neuron “prompts” regularly. Subsequent, it “exhibits” GPT-4, OpenAI’s newest text-generating AI mannequin, these extremely lively neurons and has GPT-4 generate an evidence. To find out how correct the reason is, the instrument gives GPT-4 with textual content sequences and has it predict, or simulate, how the neuron would behave. In then compares the conduct of the simulated neuron with the conduct of the particular neuron.

“Utilizing this system, we will principally, for each single neuron, give you some type of preliminary pure language clarification for what it’s doing and now have a rating for a way how nicely that clarification matches the precise conduct,” Jeff Wu, who leads the scalable alignment workforce at OpenAI, mentioned. “We’re utilizing GPT-4 as a part of the method to provide explanations of what a neuron is searching for after which rating how nicely these explanations match the truth of what it’s doing.”

The researchers have been capable of generate explanations for all 307,200 neurons in GPT-2, which they compiled in an information set that’s been launched alongside the instrument code.

Instruments like this might sooner or later be used to enhance an LLM’s efficiency, the researchers say — for instance to chop down on bias or toxicity. However they acknowledge that it has a protracted strategy to go earlier than it’s genuinely helpful. The instrument was assured in its explanations for about 1,000 of these neurons, a small fraction of the whole.

A cynical particular person would possibly argue, too, that the instrument is actually an commercial for GPT-4, on condition that it requires GPT-4 to work. Different LLM interpretability instruments are much less depending on business APIs, like DeepMind’s Tracr, a compiler that interprets applications into neural community fashions.

Wu mentioned that isn’t the case — the actual fact the instrument makes use of GPT-4 is merely “incidental” — and, quite the opposite, exhibits GPT-4’s weaknesses on this space. He additionally mentioned it wasn’t created with business functions in thoughts and, in idea, may very well be tailored to make use of LLMs apart from GPT-4.

The instrument identifies neurons activating throughout layers within the LLM.

“A lot of the explanations rating fairly poorly or don’t clarify that a lot of the conduct of the particular neuron,” Wu mentioned. “A variety of the neurons, for instance, lively in a manner the place it’s very exhausting to inform what’s happening — like they activate on 5 – 6 various things, however there’s no discernible sample. Typically there’s a discernible sample, however GPT-4 is unable to seek out it.”

That’s to say nothing of extra advanced, newer and bigger fashions, or fashions that may browse the net for info. However on that second level, Wu believes that net looking wouldn’t change the instrument’s underlying mechanisms a lot. It might merely be tweaked, he says, to determine why neurons determine to make sure search engine queries or entry explicit web sites.

“We hope that this may open up a promising avenue to deal with interpretability in an automatic manner that others can construct on and contribute to,” Wu mentioned. “The hope is that we actually even have good explanations of not simply not simply what neurons are responding to however general, the conduct of those fashions — what sorts of circuits they’re computing and the way sure neurons have an effect on different neurons.”

[ad_2]

Source link